Your sales team gets 150 leads a month. Every lead gets the same treatment — same follow-up email template, same call priority, same spot in the queue. The rep who picks up the phone first gets the lead, whether it’s a $50K opportunity from a VP at a growing company or a college student researching vendors for a class project.

Your team knows these leads aren’t equal. Your best rep can glance at a lead and tell you in five seconds whether it’s worth a call. But that instinct stays in one person’s head — there’s no system to scale it.

Meanwhile, the high-value leads that come in at 4pm on Friday sit in the same queue as everything else. By Monday morning, they’ve already talked to your competitor.

This is the problem lead scoring solves. Not with a $50K platform or a team of data scientists — with a systematic approach that matches the size and data volume you actually have.

Your Sales Team Is Guessing

Here’s the math that should bother you.

If 20% of your leads are genuinely qualified (typical for inbound) and every lead gets the same attention, your team is spending 80% of its selling time on leads that were never going to convert. At a staffing agency placing technical contractors, one missed high-quality lead represents $15-50K in lost placement revenue. At a SaaS startup, one lost enterprise lead is $20-100K in annual contract value.

The irony: your team isn’t lazy. They’re working hard. They’re just working on the wrong leads because there’s no signal telling them which ones matter most.

Every sales methodology says “qualify first.” But without a scoring system, “qualification” means a 15-minute discovery call with every lead to figure out if they’re worth pursuing. At 150 leads/month, that’s about 37.5 hours, almost a full workweek, just to sort the pipeline.
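That figure is simple arithmetic. A quick sketch you can rerun with your own numbers (the function name is ours, purely illustrative):

```python
def qualification_hours(leads_per_month: int, minutes_per_call: float) -> float:
    """Hours per month spent if every lead gets one discovery call."""
    return leads_per_month * minutes_per_call / 60


# 150 leads at 15 minutes each
print(qualification_hours(150, 15))  # 37.5
```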

Lead scoring does that sorting automatically. Before the call, before the email, before a rep invests a single minute.

What Lead Scoring Actually Is (and Isn’t)

Lead scoring assigns a number to each lead based on how likely they are to become a customer. Higher score = higher priority. Your sales team works the list from the top down.

It’s not magic. It’s a systematic way to do what your best sales rep already does intuitively — read the signals and decide who to call first. The difference is that a scoring system does it consistently, for every lead, at any volume, without relying on one person’s gut feeling.

Traditional Scoring vs AI Scoring

Traditional (rule-based): You define the rules. “Job title is VP or higher → +20 points. Company has 50+ employees → +15 points. Downloaded our case study → +10 points.” You set the criteria and weights. The system applies them mechanically.

Pros: works with any amount of data, easy to understand, you control the logic. Cons: reflects what you think predicts conversion, not necessarily what actually does. Requires manual updating as your business changes.
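To make those three example rules concrete, here is a minimal sketch in Python. The `Lead` fields and the set of titles that count as “VP or higher” are assumptions for illustration; your CRM will name things differently.

```python
from dataclasses import dataclass

# Assumption: which titles count as "VP or higher". Adjust for your market.
SENIOR_TITLES = {"vp", "svp", "evp", "ceo", "cfo", "coo", "cto", "cmo"}


@dataclass
class Lead:
    title: str
    employees: int
    downloaded_case_study: bool


def rule_based_score(lead: Lead) -> int:
    """Apply the three example rules mechanically and return the point total."""
    score = 0
    if lead.title.strip().lower() in SENIOR_TITLES:
        score += 20  # job title is VP or higher
    if lead.employees >= 50:
        score += 15  # company has 50+ employees
    if lead.downloaded_case_study:
        score += 10  # downloaded the case study
    return score


print(rule_based_score(Lead("VP", 120, True)))       # 45
print(rule_based_score(Lead("Intern", 10, False)))   # 0
```

Every weight here is something you chose, which is exactly the strength and the weakness of rule-based scoring.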

AI (predictive): The model learns from your historical data which signals actually predict conversion. Maybe “visited pricing page twice within 3 days” is a stronger buying signal than job title for your specific business. Maybe leads from LinkedIn convert at 3x the rate of leads from paid ads. The AI finds patterns across your data that you wouldn’t spot manually.

Pros: discovers non-obvious patterns, improves automatically as new data comes in, more accurate at scale. Cons: needs historical data to learn from (at least 200 conversions), can feel like a black box, requires initial investment.
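A full predictive model is more involved, but the core idea (let historical outcomes rank the signals) can be sketched with a simple lift calculation: how much more often do leads with a signal convert than leads without it? The records below are invented toy data, and `conversion_lift` is a hypothetical helper, not a substitute for a trained model.

```python
def conversion_lift(leads: list[dict], signal: str) -> float:
    """Conversion rate with the signal divided by the rate without it."""
    with_signal = [lead for lead in leads if lead[signal]]
    without_signal = [lead for lead in leads if not lead[signal]]
    won_with = sum(lead["converted"] for lead in with_signal)
    won_without = sum(lead["converted"] for lead in without_signal)
    if not with_signal or won_without == 0:
        raise ValueError("not enough data to compare the two groups")
    # Equivalent to (won_with / n_with) / (won_without / n_without).
    return (won_with * len(without_signal)) / (won_without * len(with_signal))


# Toy history: 10 LinkedIn leads (3 won), 10 other leads (1 won).
history = (
    [{"from_linkedin": True, "converted": True}] * 3
    + [{"from_linkedin": True, "converted": False}] * 7
    + [{"from_linkedin": False, "converted": True}] * 1
    + [{"from_linkedin": False, "converted": False}] * 9
)
print(conversion_lift(history, "from_linkedin"))  # 3.0
```

With only a handful of conversions per group, a number like this is mostly noise, which is why the data requirement matters.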

The key difference: Traditional scoring reflects your assumptions. AI scoring reflects your data. At small scale with limited history, they can be equally effective. But as your data grows, AI pulls ahead because it adapts without you manually re-weighting criteria every quarter.

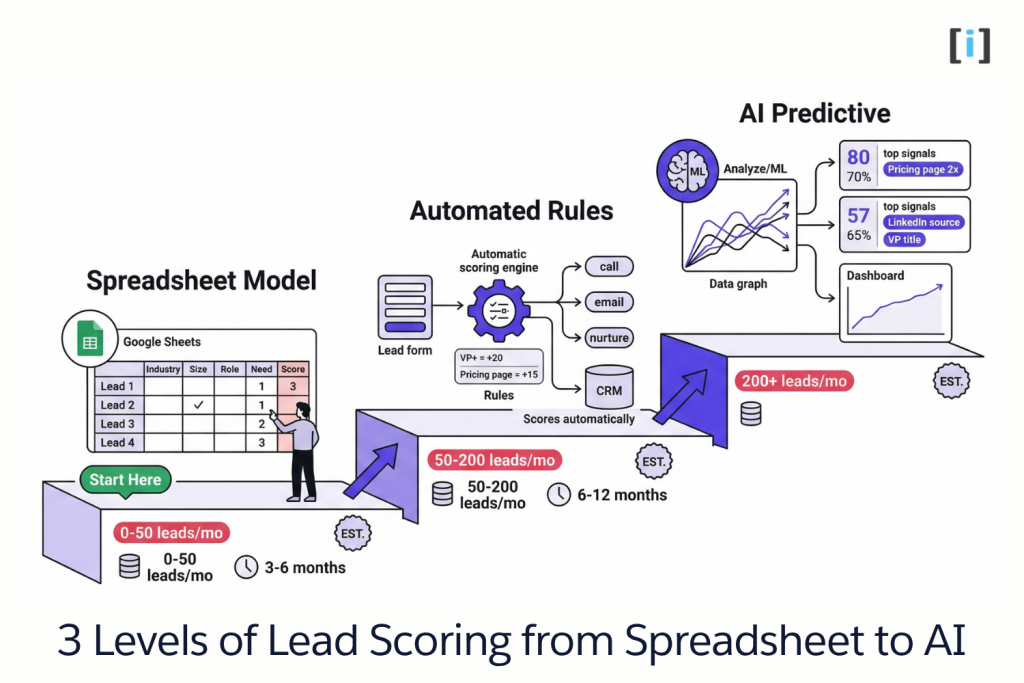

The Scoring Progression (Start Here, Graduate Up)

This is the framework nobody else talks about. You don’t jump straight to AI — you build up to it.

Level 1 — The Spreadsheet Model (0-50 leads/month)

Start here. Seriously.

Build a scoring matrix in Google Sheets with 5-7 criteria that your best sales rep uses to prioritize leads:

| Criteria | 1 Point | 3 Points | 5 Points |
|---|---|---|---|
| Company size | 1-10 employees | 11-50 | 51+ |
| Role | Individual contributor | Manager | Director/VP/C-suite |
| Industry fit | Outside target | Adjacent | Core industry |
| Stated need | Vague/browsing | General interest | Specific problem described |
| Source | Cold/paid ad | Organic/content | Referral/direct |
| Engagement | Form only | Downloaded resource | Replied to email/called |
| Timeline | “Just exploring” | “Next 6 months” | “This quarter” |

Maximum score: 35. Score every lead manually — at this volume, it takes 2 minutes per lead.
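If you later want the same matrix outside a spreadsheet, it fits in a few lines. This is a sketch only: the criterion keys and the `low`/`mid`/`high` labels are our shorthand for the three columns, not anything your tools require.

```python
CRITERIA = (
    "company_size", "role", "industry_fit", "stated_need",
    "source", "engagement", "timeline",
)
POINTS = {"low": 1, "mid": 3, "high": 5}  # the 1 / 3 / 5 point columns


def matrix_score(ratings: dict[str, str]) -> int:
    """Sum the points for a lead rated low/mid/high on all seven criteria."""
    missing = [c for c in CRITERIA if c not in ratings]
    if missing:
        raise ValueError(f"unrated criteria: {missing}")
    return sum(POINTS[ratings[c]] for c in CRITERIA)


lead = {
    "company_size": "high", "role": "mid", "industry_fit": "high",
    "stated_need": "high", "source": "low", "engagement": "mid",
    "timeline": "mid",
}
print(matrix_score(lead))  # 25
```

Refusing to score a lead with unrated criteria is deliberate: a half-filled row looks like a weak lead when it is really a missing answer.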

Why this is the right starting point: It forces you to articulate what “qualified” actually means for your business. Most teams can’t — they just “know it when they see it.” A spreadsheet model turns instinct into criteria. And those criteria become the foundation for everything that follows.

Use this for 3-6 months. Track which scores actually converted. You’ll start seeing patterns — and you’ll have the data for Level 2.
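Tracking “which scores actually converted” can be as simple as grouping leads into score bands and comparing conversion rates. A sketch, assuming you export `(score, converted)` pairs from your sheet; the sample records are made up.

```python
from collections import defaultdict


def conversion_by_band(records, band_width: int = 5) -> dict[int, float]:
    """Map each score band (by its lower bound) to its conversion rate."""
    totals = defaultdict(int)
    wins = defaultdict(int)
    for score, converted in records:
        band = (score // band_width) * band_width
        totals[band] += 1
        wins[band] += int(converted)
    return {band: wins[band] / totals[band] for band in sorted(totals)}


records = [
    (31, True), (33, True), (32, False), (30, False),
    (12, False), (14, False), (11, True), (13, False),
]
print(conversion_by_band(records))  # {10: 0.25, 30: 0.5}
```

If high bands do not convert better than low ones after a few months, the criteria need rework before you automate anything.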

Level 2 — Automated Rule-Based Scoring (50-200 leads/month)

Same scoring model, but now it runs automatically. When a lead enters your CRM, the system pulls available data — form fields, company info, behavior signals — and calculates a score without a human touching it.

How it works:

- New lead submits form → automation fires

- System checks: company size (from form or enrichment), role (from form), source (UTM tracking), engagement (page visits, downloads) → calculates score

- Lead routes based on score: >25 = call within 2 hours (high priority). 15-25 = personalized email sequence (warm nurture). <15 = automated nurture campaign (long-term).
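The routing step above reduces to a couple of threshold checks. A minimal sketch using the cutoffs from the list; the returned labels are placeholders for whatever your CRM or automation tool calls these tracks.

```python
def route_lead(score: int) -> str:
    """Pick a follow-up track from a Level 1/2 score (max 35)."""
    if score > 25:
        return "call_within_2_hours"          # high priority
    if score >= 15:
        return "personalized_email_sequence"  # warm nurture
    return "automated_nurture"                # long-term


for s in (31, 25, 15, 9):
    print(s, route_lead(s))
```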

Your CRM probably has basic scoring built in — even free tiers of HubSpot, Pipedrive, and Zoho support rule-based scoring. If yours doesn’t, a simple automation workflow or custom script does the job.

Why not jump straight to AI: At this volume, you don’t have enough conversion data for a machine learning model to learn meaningful patterns. A well-built rule-based model with 7 good criteria will outperform a half-trained AI model every time. Don’t let anyone sell you AI scoring when you have 6 months of data and 80 conversions.

Use this for 6-12 months. You’re now building the historical dataset — scored leads, conversion outcomes, time-to-close, deal values — that AI will learn from in Level 3.

Level 3 — AI-Powered Predictive Scoring (200+ leads/month)

Now the fun starts. You’ve got 6-12 months of data: which leads converted, which didn’t, what scores they had, what signals were present.

An AI model analyzes this history and identifies the actual predictive signals — not what you assumed mattered, but what statistically predicts conversion for your business.

Common surprises teams discover:

- “We weighted company size heavily, but leads who visited our pricing page twice convert at 4x regardless of company size.”

- “Referral source is 3x more predictive than job title.”

- “Leads who come through LinkedIn campaigns and engage with a case study within 48 hours have a 40% close rate — triple our average.”

The AI model scores new leads automatically and recalibrates as new data comes in. It gets more accurate over time, not less — because every closed-won and closed-lost outcome teaches it something.
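To show what “learning the weights from outcomes” means, here is a deliberately tiny logistic regression in plain Python. It is a sketch under loud assumptions: the eight rows are invented, there are only two features, and a real build would use an established library and far more data. Retraining is just calling the same function again on the updated history.

```python
import math


def predict(weights, bias, x):
    """Predicted conversion probability for one lead."""
    z = bias + sum(w * xj for w, xj in zip(weights, x))
    return 1 / (1 + math.exp(-z))


def train_logistic(rows, labels, epochs=2000, lr=0.5):
    """Fit weights with batch gradient descent on the log loss."""
    weights = [0.0] * len(rows[0])
    bias = 0.0
    n = len(rows)
    for _ in range(epochs):
        grad_w = [0.0] * len(weights)
        grad_b = 0.0
        for x, y in zip(rows, labels):
            err = predict(weights, bias, x) - y
            for j, xj in enumerate(x):
                grad_w[j] += err * xj
            grad_b += err
        weights = [w - lr * g / n for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / n
    return weights, bias


# Features: [visited_pricing_twice, is_large_company] (toy data)
ROWS = [[1, 0], [1, 1], [1, 0], [1, 1], [0, 1], [0, 0], [0, 1], [0, 0]]
LABELS = [1, 1, 1, 0, 0, 0, 1, 0]

weights, bias = train_logistic(ROWS, LABELS)
print("pricing weight:", round(weights[0], 2))
print("company-size weight:", round(weights[1], 2))
```

In this toy history, large and small companies convert at the same rate, so the learned company-size weight ends up near zero while the pricing-page weight is clearly positive. That is the kind of “surprise” described above.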

Key Takeaway: The progression matters. Level 1 defines your criteria. Level 2 automates and routes. Level 3 optimizes with data. Skip to Level 3 without the foundation, and you’re training an AI model on garbage data.

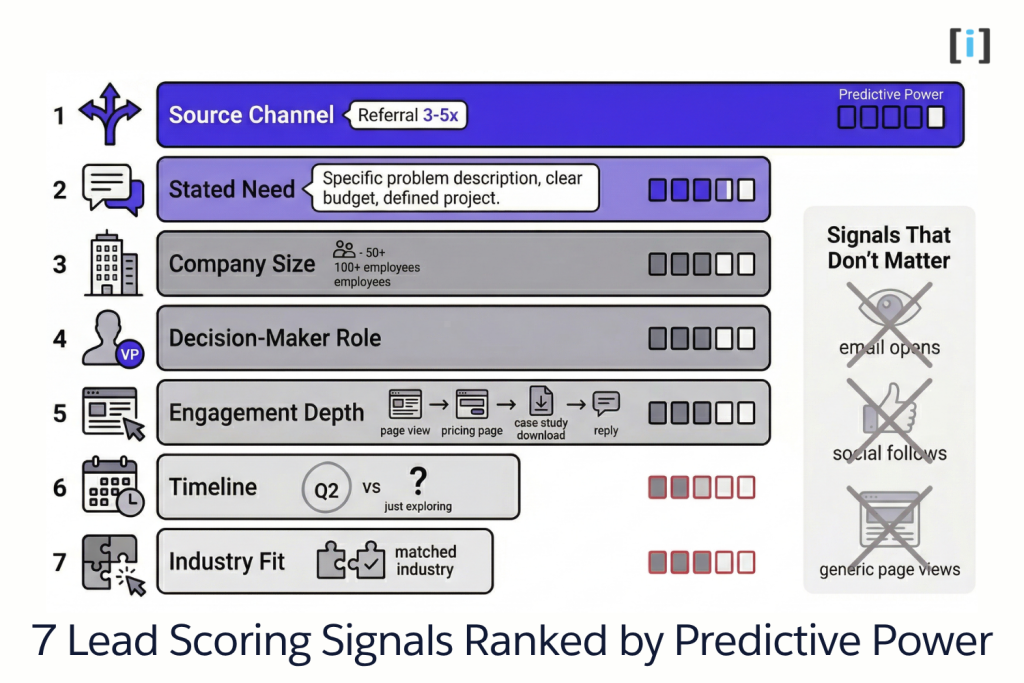

What Signals to Score (For Small Teams)

Enterprise articles list 30+ scoring signals. You don’t have the data for that. Focus on these seven — ranked by typical predictive power for small teams.

The 7 Signals That Matter Most

1. Source channel. Where the lead came from is often the single strongest predictor. Referrals typically convert 3-5x higher than paid ads. Organic search converts differently than social. Track source religiously — it’s free data with outsized predictive value.

2. Stated need. Did they describe a specific problem? “We need help automating our client onboarding” is a buying signal. “Just checking out your website” isn’t. If your form has a free-text field, pay attention to it.

3. Company size or revenue indicator. Not because bigger is always better — but because it predicts budget capacity. A 3-person startup might love your service but can’t afford it for 18 months.

4. Role and decision-making authority. A VP filling out your contact form is different from an intern researching vendors. This doesn’t mean ignore individual contributors — but weight accordingly.

5. Engagement depth. A lead who visited your homepage is different from one who visited your pricing page, downloaded a case study, and came back the next day. Depth of engagement signals intent.

6. Timeline indicator. “Looking to start in Q2” vs “just exploring for next year.” Urgency predicts conversion velocity — and conversion velocity predicts whether the deal actually closes or dies in committee.

7. Industry fit. Do they match an industry you’ve successfully served? If your last 10 closed deals were staffing agencies and SaaS companies, a new lead from those industries has built-in social proof and reference potential.

Signals That Don’t Matter as Much as You Think

Email opens. Too noisy. Apple Mail privacy features pre-load images, inflating open rates. An “open” doesn’t mean they read it.

Social media follows. Vanity metric. Following your LinkedIn page doesn’t correlate with buying intent.

Raw page view count. 15 page views sounds engaged — unless they were bouncing around your blog for a school project. Weight which pages (pricing, case studies) over how many pages.
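One way to weight which pages over how many, sketched in Python. The page categories, the weights, and the cap on repeat views are all assumptions to tune against your own conversions.

```python
from collections import Counter

# Hypothetical weights: high-intent pages count far more than blog posts.
PAGE_WEIGHTS = {"pricing": 10, "case_study": 8, "blog": 1}
DEFAULT_WEIGHT = 1
MAX_VIEWS_COUNTED = 2  # repeat views beyond this add nothing


def engagement_score(page_types: list[str]) -> int:
    """Score a visit history by page type, capping repeat views per type."""
    counts = Counter(page_types)
    return sum(
        PAGE_WEIGHTS.get(page, DEFAULT_WEIGHT) * min(views, MAX_VIEWS_COUNTED)
        for page, views in counts.items()
    )


print(engagement_score(["blog"] * 15))                          # 2
print(engagement_score(["pricing", "pricing", "case_study"]))   # 28
```

Three views of the right pages outscore fifteen blog views, which is the behavior you want.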

Pro Tip: When building your Level 1 spreadsheet, start with just the top 4 signals. Add signals 5-7 after a month when you have a feel for what’s differentiating your best leads. Starting with too many criteria creates scoring fatigue and inconsistency.

What It Costs: Platform vs Custom

Let’s do real cost math at small-team scale — not enterprise.

Platform AI Scoring

HubSpot Professional: $800/month. Includes predictive lead scoring plus the full marketing platform. If you’re already on HubSpot, this is a no-brainer — turn on the feature you’re paying for. If you’re not on HubSpot, you’re adopting a $9,600/year platform to get one feature.

Salesforce + Einstein: Base Salesforce ($25-300/user/month) plus Einstein scoring ($50/user/month). For a 5-person sales team: $375-1,750/month. Powerful, but you’re paying enterprise prices for a small-team problem.

Standalone scoring tools (MadKudu, Clearbit Reveal, 6sense): $500-2,000/month. Often require minimum lead volumes to be effective. Many are built for PLG SaaS companies, not services businesses.

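3-year total at small scale: roughly $13,500 to $72,000 at the list prices above, depending on platform and team size ($28,800 for HubSpot Professional; $13,500-$63,000 for Salesforce with Einstein at five users; $18,000-$72,000 for standalone tools). And your scoring model only works inside that platform.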

Custom AI Scoring

Build cost: $12,000-$25,000 one-time. Includes: data analysis, scoring model trained on your conversion history, CRM integration, lead routing automation, and a simple dashboard.

Hosting + maintenance: $300-800/month for cloud infrastructure, periodic model retraining, and system updates.

3-year total: $22,800-$53,800. You own the model. It runs on your infrastructure. It works with any CRM. If you switch from Pipedrive to HubSpot next year, your scoring model comes with you.
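The 3-year totals in this section come from one formula: one-time cost plus 36 months of recurring cost. A small sketch so you can plug in your own quotes (the function name is illustrative; the prices are the ones quoted above):

```python
def three_year_total(monthly_cost: int, upfront_cost: int = 0, months: int = 36) -> int:
    """One-time cost plus recurring cost over the period."""
    return upfront_cost + monthly_cost * months


print(three_year_total(800))           # HubSpot Professional: 28800
print(three_year_total(1750))          # Salesforce + Einstein, 5 users, top tier: 63000
print(three_year_total(300, 12_000))   # custom build, low end: 22800
print(three_year_total(800, 25_000))   # custom build, high end: 53800
```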

The Honest Comparison

At enterprise scale (1,000+ leads/month, dedicated marketing ops team), platforms win on convenience and ecosystem integration.

At small-team scale (100-500 leads/month), custom and platform costs are comparable — but custom gives you ownership and flexibility.

The practical advice: If you’re already paying for HubSpot Professional or Salesforce with Einstein, use their built-in scoring first. It’s included in what you’re paying. If you’re not on those platforms — and don’t want to adopt one just for scoring — custom is often cheaper than a platform subscription you’ll only use for one feature.

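The Litmus Test: Are you considering an $800/month platform primarily for lead scoring? A $15K custom build recovers its build cost in about 19 months of avoided subscription fees ($15,000 ÷ $800 ≈ 18.75), before hosting. With hosting at the low end ($300/month), the net saving is $500/month and payback is closer to 30 months. After that, you own it. Are you already on that platform? Use what you’ve got.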
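The payback claim is easy to check, and it is worth including hosting when you do. A sketch (function and parameter names are illustrative; the figures are the ones quoted in this section):

```python
import math


def payback_months(build_cost: int, platform_monthly: int, hosting_monthly: int = 0):
    """Months until avoided platform fees cover the build cost, or None if never."""
    monthly_saving = platform_monthly - hosting_monthly
    if monthly_saving <= 0:
        return None  # custom costs as much or more each month
    return math.ceil(build_cost / monthly_saving)


print(payback_months(15_000, 800))        # 19 (ignoring hosting)
print(payback_months(15_000, 800, 300))   # 30 (low-end hosting)
print(payback_months(15_000, 800, 800))   # None (high-end hosting)
```

The spread between those three answers is why the hosting and maintenance quote matters as much as the build quote.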

A Real Example: AI Lead Scoring for a Staffing Agency

Abstract frameworks are useful. Concrete results are better.

The Problem

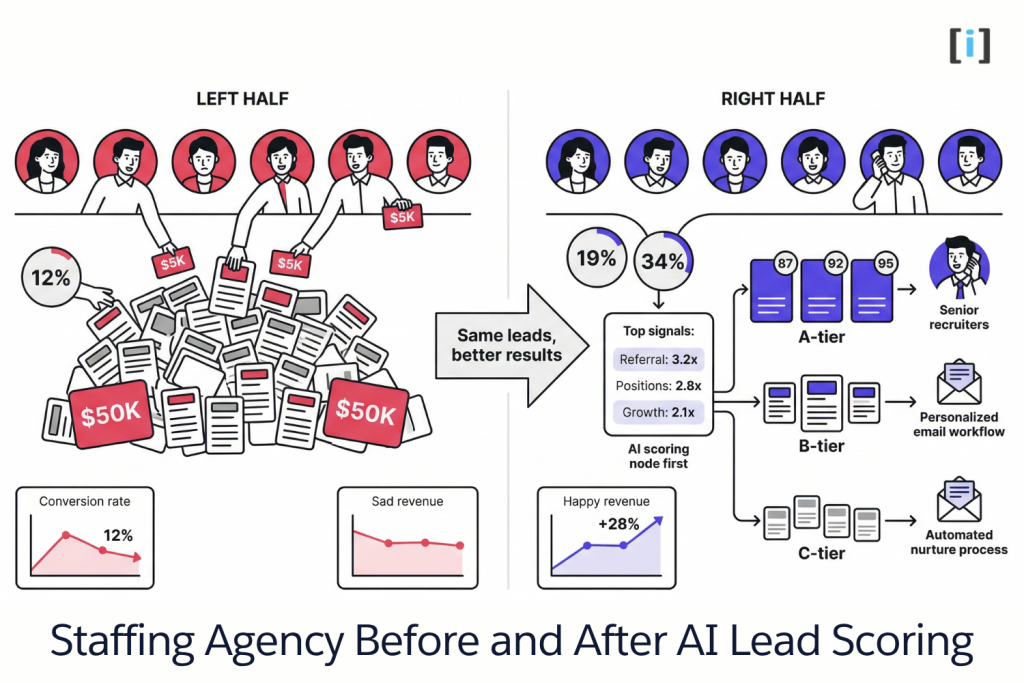

An 8-person staffing agency placing technical contractors. They receive 200-300 leads per month from job boards, their website, referrals, and LinkedIn outreach.

All leads went into a shared CRM queue. Reps cherry-picked based on gut feeling and whoever they recognized. The VP’s referral from a Fortune 500 company sat in the same queue as a one-time contract request from a 3-person startup.

Result: Reps spent equal time on $5K opportunities and $50K opportunities. No prioritization. No routing. Blended close rate: 12%.

The Scoring Model

We built a custom scoring model using 8 months of their CRM data — 1,800 leads with full outcome tracking (won, lost, no response, disqualified).

The AI identified the signals that actually predicted conversion for this specific agency:

| Signal | Predictive Power |
|---|---|
| Source: referral | 3.2x more likely to close |
| Positions: 3+ | 2.8x (multi-hire = serious buyer) |
| Company growth rate | 2.1x (actively hiring companies close faster) |
| Decision-maker title (VP+) | 1.9x |
| Industry: tech or finance | 1.7x (agency’s strongest verticals) |

Some surprises: company size (a common default criterion) barely mattered — small companies hiring aggressively converted better than large companies with slow processes. And “urgency” language in the initial inquiry (“need someone by Q2”) was a stronger signal than any demographic factor.

The Routing

Score buckets determined the response:

- A-tier (85+): Immediate call from senior recruiter. Target response time: 30 minutes.

- B-tier (60-84): Personalized email from assigned recruiter + scheduled call within 24 hours.

- C-tier (<60): Automated nurture sequence — monthly value emails, quarterly check-ins.
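These buckets can live in a small lookup rather than a chain of if-statements, which makes the cutoffs easy to adjust later. A sketch using the thresholds above; the structure and names are ours, not the agency's actual implementation.

```python
from bisect import bisect_right

TIER_FLOORS = [60, 85]      # lowest score for B-tier and A-tier
TIERS = ["C", "B", "A"]
PLAYBOOK = {
    "A": "immediate call from senior recruiter (target: 30 minutes)",
    "B": "personalized email + scheduled call within 24 hours",
    "C": "automated nurture sequence",
}


def tier_for(score: float) -> str:
    """Return the tier whose floor is the highest one at or below the score."""
    return TIERS[bisect_right(TIER_FLOORS, score)]


for s in (92, 85, 84, 60, 59):
    t = tier_for(s)
    print(s, t, "->", PLAYBOOK[t])
```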

The Results

First quarter after implementation:

- A-tier close rate: 34% (up from 12% blended)

- Overall close rate: 19% (58% improvement)

- Revenue per rep: +28% — not from more leads, but from working the right leads first

- Average time-to-close for A-tier: 18 days (down from 34 days blended) — faster because high-intent leads got immediate attention instead of sitting in a queue
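For anyone checking the percentages, they are relative changes against the 12% blended baseline and the 34-day blended time-to-close. A short sketch (helper name is illustrative):

```python
def relative_change(before: float, after: float) -> float:
    """Change relative to the starting value, e.g. 0.58 means +58%."""
    return (after - before) / before


print(round(relative_change(12, 19) * 100))   # 58  (overall close rate, 12% -> 19%)
print(round(relative_change(34, 18) * 100))   # -47 (A-tier days to close, 34 -> 18)
```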

The model also revealed that their C-tier leads weren’t worthless; they just needed a longer nurture cycle. Two C-tier leads that entered the automated nurture converted 4-5 months later and became the two largest placements of the quarter in which they closed.

Real Talk: This agency had 8 months of clean CRM data with outcome tracking before we built the AI model. If they’d come to us with 3 months of data and no conversion tracking, we’d have started them at Level 2 (automated rule-based scoring) and built the AI model later. Honest scoping matters more than impressive technology.

FAQ

How many leads do I need for AI lead scoring to work?

For rule-based scoring (Levels 1-2), any volume works: even 20 leads/month benefits from systematic prioritization. For AI predictive scoring (Level 3), you need 6-12 months of historical data with at least 200 tracked conversions. Below that, your model doesn’t have enough examples to learn meaningful patterns.
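As a rough self-check, the thresholds in this article can be folded into one function. Treat it as a sketch: the numbers come from the text, but how they combine (history and conversions gate Level 3, monthly volume separates Levels 1 and 2) is our simplifying assumption.

```python
def recommended_level(leads_per_month: int, months_of_history: int,
                      tracked_conversions: int) -> int:
    """Suggest a scoring level (1, 2, or 3) from volume and history."""
    if months_of_history >= 6 and tracked_conversions >= 200:
        return 3  # enough outcomes for a predictive model
    if leads_per_month >= 50:
        return 2  # automate the rules, keep collecting outcomes
    return 1      # spreadsheet model


print(recommended_level(250, 8, 216))  # 3
print(recommended_level(100, 6, 80))   # 2
print(recommended_level(20, 2, 3))     # 1
```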

Can I use AI lead scoring with my existing CRM?

Yes. Custom scoring models connect to any CRM with an API — Salesforce, HubSpot, Pipedrive, Zoho, Close, even Airtable or Google Sheets. Platform-native scoring (HubSpot Predictive, Salesforce Einstein) only works within those specific platforms.

How long does it take to build a lead scoring model?

Level 1 spreadsheet: 1-2 days. Level 2 automated rules: 1-2 weeks to build and integrate with your CRM. Level 3 AI predictive model: 4-6 weeks including data preparation, model training, CRM integration, routing setup, and testing with live leads.

Does AI lead scoring eliminate the need for sales reps?

No, and anyone saying it does is selling you something. AI tells your reps which leads to call first and why. It doesn’t make the call, build the relationship, or close the deal. Think prioritization tool, not replacement. Your best rep’s instincts plus AI data produce the highest conversion rates.

What if my scoring model is wrong?

Expected. No model is perfect on day one. Rule-based models need quarterly review — check whether your criteria still match reality. AI models retrain automatically on new conversion data. The key isn’t a perfect model at launch — it’s launching, measuring, and iterating. A mediocre scoring model that routes leads by priority still outperforms no scoring at all.